IHPC Tech Hub

Discover the power of computational modelling, simulation and AI that brings about positive impact to your business.

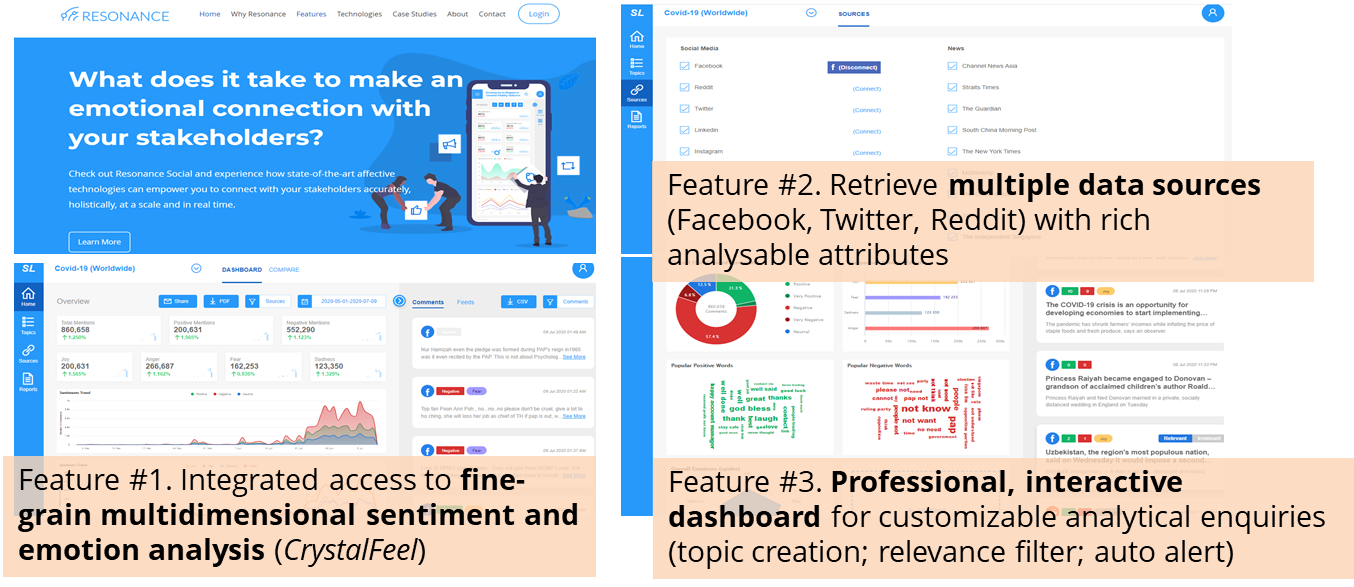

Resonance Social - Advanced Social Listening Platform

Resonance Social is an advanced social listening platform featuring end-to-end multisource social data acquisition, integrated emotion information extraction and interactive visualization. It has been developed since 2019 by engineers and scientists studying Affective and Social Intelligence at A*STAR’s Institute of High Performance Computing.

Existing social media monitoring and analytics systems and services fall short in three key areas, which limits the value they deliver to users. Resonance is developed to address these three problems in today’s social listening and media intelligence landscape.

First, the natural language processing (NLP) engines underlying existing social analytics services are typically built on discrete, categorical notions of emotion (happy vs. not happy, sad vs. not sad, angry vs. not angry) and of sentiment (positive, negative, neutral). As a result, analysts and end users gain limited insight into the underlying emotional drivers (e.g., anger, fear and sadness are distinct negative emotions) and the intensity (e.g., low, medium, high) of those emotions, so few actionable conclusions can be drawn. These services offer no integrated ability to extract and present emotional intensity information.

Second, while NLP and computational linguistics are advancing quickly, these advances create little user value without holistic access to multiple media sources, because configuring the various data APIs requires in-house technical personnel, which is a moderate to high barrier to adoption. Major social media platforms frequently change their API terms of use and technical configurations, so significant data engineering effort is needed to build these connections before valuable emotional information can be extracted reliably and in a timely manner.

Third, valuable analytic enquiries, such as comparing trends in market sentiment, public responses and brand health risks, depend on an interactive dashboard. Without one, users cannot discover the dynamic insights presented by the rich parameters of the emotional contexts.

Features

- Provide accurate, nuanced and comprehensive insights into the emotional content of social data: Integrated access to IHPC’s state-of-the-art multidimensional emotion analytic engine CrystalFeel

- Provide holistic, side-by-side insights from multiple media sources: Integrated data access to Facebook, Twitter, Reddit and more through APIs

- Provide a professional, customisable dashboard for timely and robust analytic insights: Interactive end-user visualisation dashboard with topic self-configuration, automatic reporting and contextual relevance filtering

The Science Behind

Resonance Social is developed with a focus on providing a multi-user, industry-strength and customizable platform for advanced social listening. Resonance uses CrystalFeel multidimensional emotion analysis technology at the heart of its processing engine, coupled with built-in API access to multiple data sources (Facebook and Reddit) and a suite of analytic dashboard components: overall statistics, temporal visualisation of sentiment and emotion time series, emotion-level word clouds, CSV download of source data, interactive navigation of search phrases, and flexible topic design and creation from Resonance’s master social media database.
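To make the idea of a temporal view over emotion intensities more concrete, here is a minimal Python sketch of how per-post intensity scores from several sources might be averaged by day. It is an illustration only and does not reflect Resonance's or CrystalFeel's actual implementation or API: the `ScoredPost` record, the `intensity_band` and `daily_emotion_trend` functions, the 0-to-1 score range and the band thresholds are all assumptions made for this example.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass
class ScoredPost:
    """Hypothetical record: one post with per-emotion intensity scores in [0, 1]."""
    source: str                     # e.g. "facebook" or "reddit"
    posted_on: date
    intensities: dict[str, float]   # e.g. {"anger": 0.7, "fear": 0.2}


def intensity_band(score: float) -> str:
    """Map a score to a coarse band; the thresholds are illustrative only."""
    if score < 1 / 3:
        return "low"
    if score < 2 / 3:
        return "medium"
    return "high"


def daily_emotion_trend(posts):
    """Average each emotion's intensity per day, pooling posts from all sources."""
    buckets = defaultdict(lambda: defaultdict(list))
    for post in posts:
        for emotion, score in post.intensities.items():
            buckets[post.posted_on][emotion].append(score)
    # Sort by day so the result reads as a time series.
    return {
        day: {emotion: mean(scores) for emotion, scores in emotions.items()}
        for day, emotions in sorted(buckets.items())
    }


# Example input (made-up scores, not real data).
posts = [
    ScoredPost("facebook", date(2024, 1, 1), {"anger": 0.8, "fear": 0.2}),
    ScoredPost("reddit", date(2024, 1, 1), {"anger": 0.6, "fear": 0.4}),
    ScoredPost("reddit", date(2024, 1, 2), {"anger": 0.1, "fear": 0.5}),
]
trend = daily_emotion_trend(posts)
```

A per-day mapping like `trend` is the kind of series a dashboard could plot over time, and `intensity_band` shows one possible way to turn a continuous score into the low, medium and high labels mentioned earlier.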

Industry Applications

Resonance Social extends CrystalFeel with real-time data stream integration and visualization, focusing on the Facebook and Reddit platforms, and with professional, industry-strength dashboard interfaces for social analytics. It is being used for a variety of advanced social listening applications, including but not limited to:

- Ground sensing: Understand commuter experiences in real time and inform policy evaluation and calibration

- Disease surveillance and management: Timely and wide-ranging surveillance of social media indicators of infectious disease development

- Mental wellbeing: Early community sensing of threats and signs of wellbeing issues to enable more effective allocation of healthcare resources

Visit Resonance.

For more info or collaboration opportunities, please write to enquiry@ihpc.a-star.edu.sg.
